Photosynth: did someone hack my brain?

In my final year before graduating from the University of Colorado (1993-4), I created a prototype software tool that aimed to derive three-dimensional computer models from photographs. I received funding via two small grants from the Undergraduate Research Opportunity Program and submitted a paper for presentation at the 1994 conference of the Association for Computer Aided Design in Architecture. Though the paper was not accepted, I'm quite fond of that project even these many years later. It's the last real work I did in Lisp. I very much enjoyed the geometry problems I had to solve in the process. I also enjoyed using the computer to help me visualize that geometry.

Many different things have been kicking up these memories in the past month, but one in particular compels me to write: Blaise Aguera y Arcas' Photosynth demo at TEDTalks.

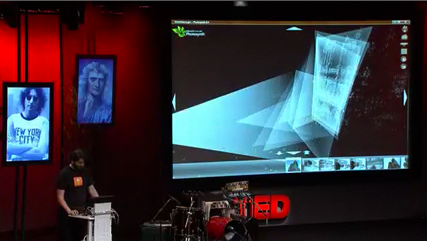

In fact, one image from that talk in particular compels me to write. (I'm showing two images here for context. The one displaying the cones of vision is my motivation for writing.)

I would accuse someone of hacking my brain, except that what interests me most about Notre Dame is the flying buttresses rather than the west facade and the rose window. It's a hauntingly beautiful model, even ghostly, made more surreal for me because it's a private memory from fourteen years ago that only escaped my own mind in verbal descriptions and quick sketches with close friends.

It's profoundly exciting to see the tool I envisioned so long ago come into existence without the slightest effort from me, and then neatly enhanced by resources at Flickr along with some very cool developments in computer vision.

I've retyped the Introduction and Conclusion from my 1994 paper below. Tooting my own horn, I'm especially pleased to have this prediction of future automation in writing.

Introduction

Although three-dimensional computer models serve many useful purposes, their creation remains difficult, especially when they are created from poorly documented existing objects or buildings. Generally, one must directly measure the objects to be modeled, then enter the measurements into a computer-aided drafting (CAD) system with a mouse or digitizing tablet. Direct measurement is often difficult and inaccurate when the object is intricate, very small, or very large. Photogrammetry, a technique of obtaining measurements from photographs, offers an alternative to direct measurement for the generation of three-dimensional models.

The purpose of this project is to create a suite of tools to derive three-dimensional computer models from photographs.

Advantages of Photography

First, most contact with the object is unnecessary because one generates the model from photographs, which is very useful for particularly large, small, or delicate objects. Second, photography is very general. One can take pictures of buildings as easily as furniture. One could use the same tools to produce models of objects of dramatically different scales, whereas in direct measurement one must have different tools for objects of different scales. Third, there is potential for photogrammetric techniques to be completely automated.

[snip]

Conclusion

Since its early development, perspective has involved measurement. The techniques used here expand on this relationship to generate computer models from photographs. The current implementation is probably no more convenient than direct measurement, nor any less time consuming. It is also not particularly useful for organic objects. Nonetheless, it generates models entirely from photographs. No special cameras, drafting tablets, or other specialized equipment are required, except a computer. It is equally useful for large and small scale objects. It also offers the possibility of completely automating photogrammetry, should artificial intelligence techniques become clever enough to recognize rectilinear shapes in photographs.
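
As an aside, the basic geometric idea behind measuring from photographs can be sketched in a few lines: if the same point appears in two photos taken from known positions, the two lines of sight should cross at that point in space. The Python below is only an illustration of that idea, not the method from the 1994 Lisp implementation or from Photosynth. The function name `triangulate`, the camera positions, and the ray directions are all assumptions made up for the example, and it takes the midpoint of the shortest segment between the two rays so that slightly noisy rays still give an answer.

```python
def sub(a, b):
    """Component-wise difference of two 3D vectors."""
    return tuple(x - y for x, y in zip(a, b))


def dot(a, b):
    """Dot product of two 3D vectors."""
    return sum(x * y for x, y in zip(a, b))


def along(origin, direction, t):
    """Point reached by travelling t units of `direction` from `origin`."""
    return tuple(o + t * d for o, d in zip(origin, direction))


def triangulate(c1, d1, c2, d2):
    """Estimate a 3D point from two lines of sight (hypothetical helper).

    c1, c2 are assumed camera positions; d1, d2 are assumed directions
    from each camera toward the same feature. Returns the midpoint of
    the shortest segment joining the two lines.
    """
    w = sub(c1, c2)
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w), dot(d2, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("lines of sight are parallel; no unique point")
    s = (b * e - c * d) / denom  # distance parameter along the first ray
    t = (a * e - b * d) / denom  # distance parameter along the second ray
    p1 = along(c1, d1, s)
    p2 = along(c2, d2, t)
    return tuple((x + y) / 2 for x, y in zip(p1, p2))


# Two made-up camera positions looking at the same made-up feature.
point = triangulate((0, 0, 0), (5, 5, 20), (10, 0, 0), (-5, 5, 20))
```

In practice the hard part is everything this sketch takes for granted: knowing where each camera stood, and knowing which pixels in different photos show the same feature.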